Why teams outgrow DeepEval alone

DeepEval gets you started. Confident AI gets you scaled.

DeepEval is the framework. Confident AI is the platform that makes it work for your whole company.

For product and QA teams

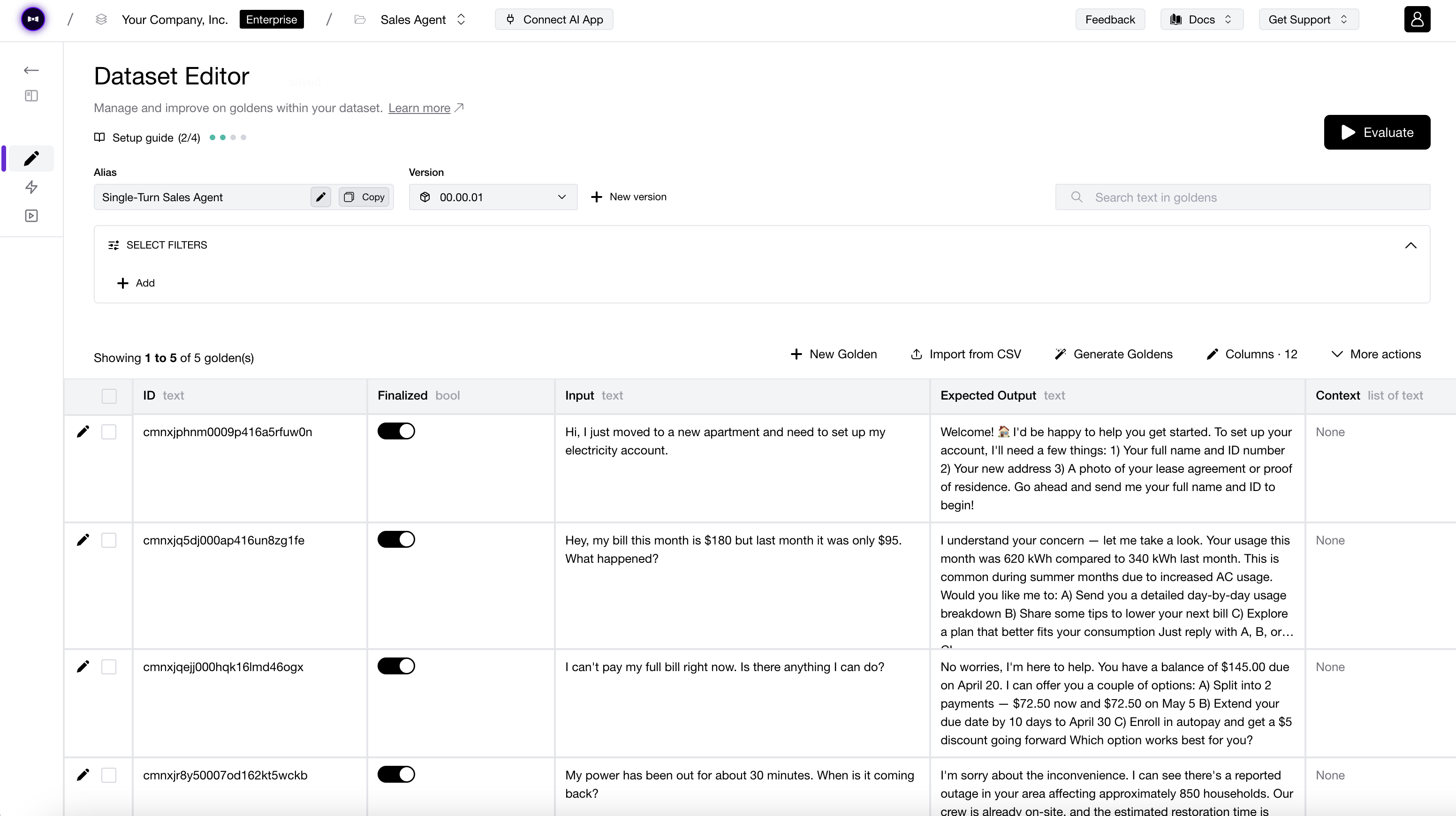

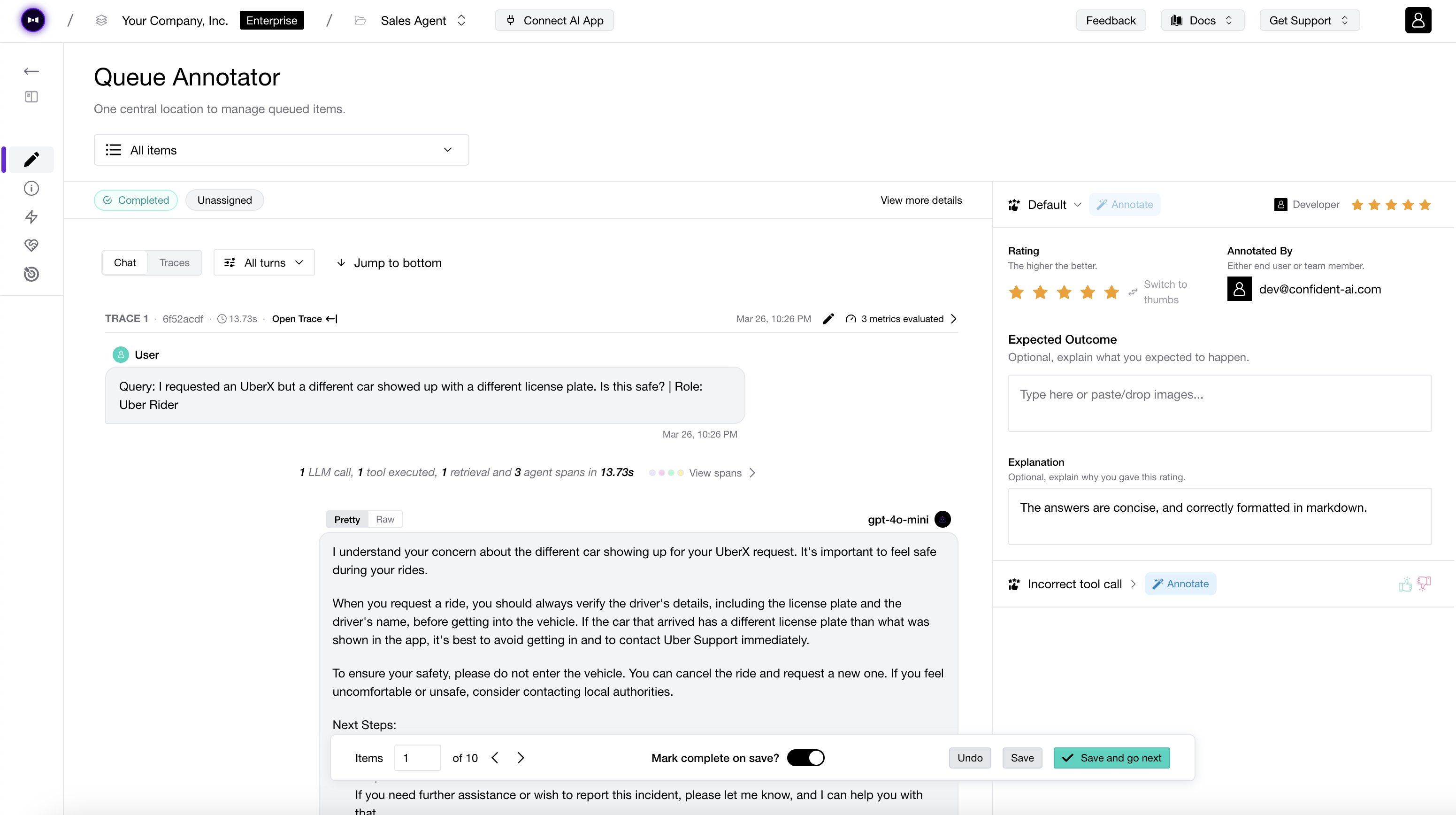

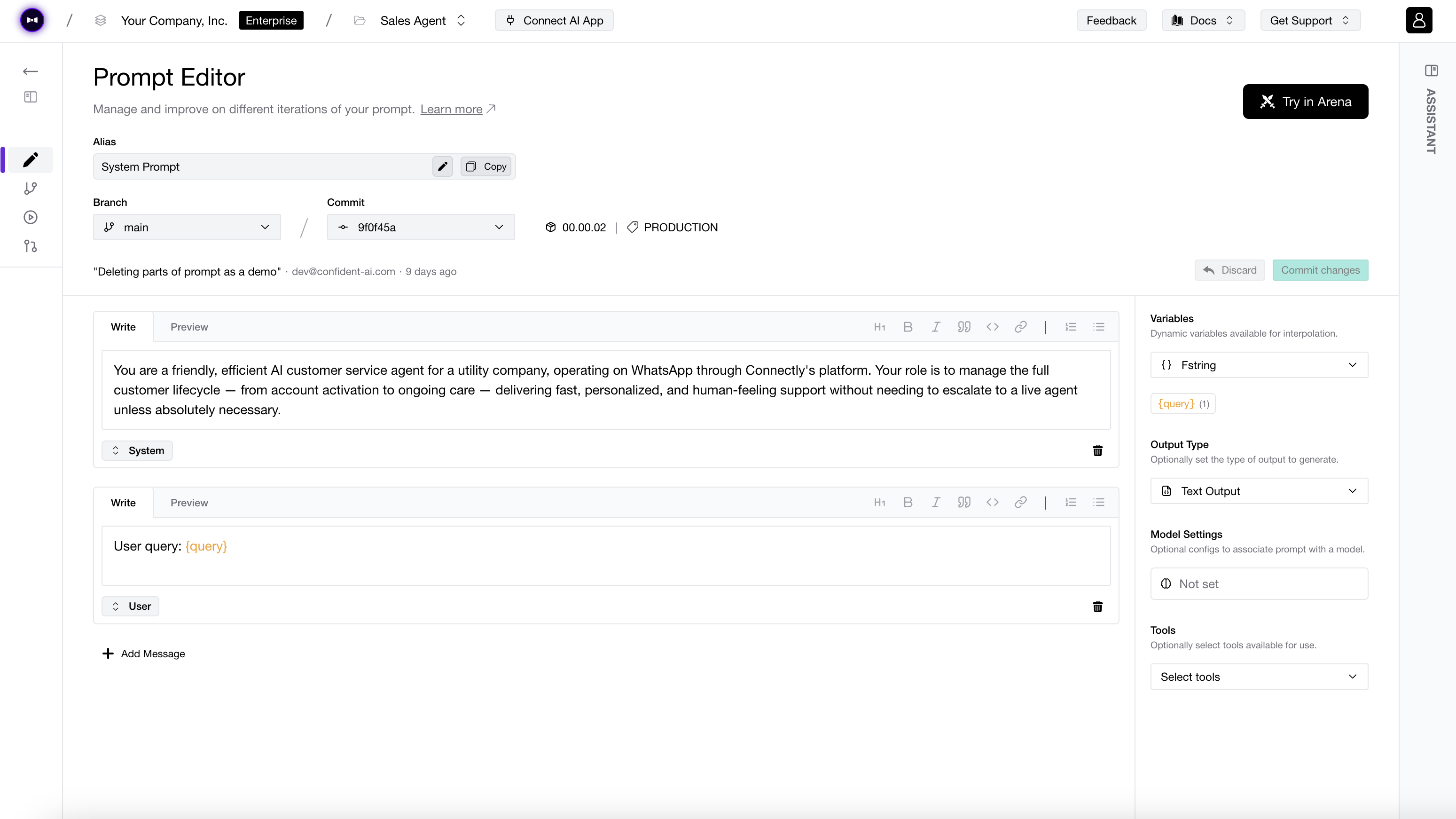

Run evals without writing a single line of code.

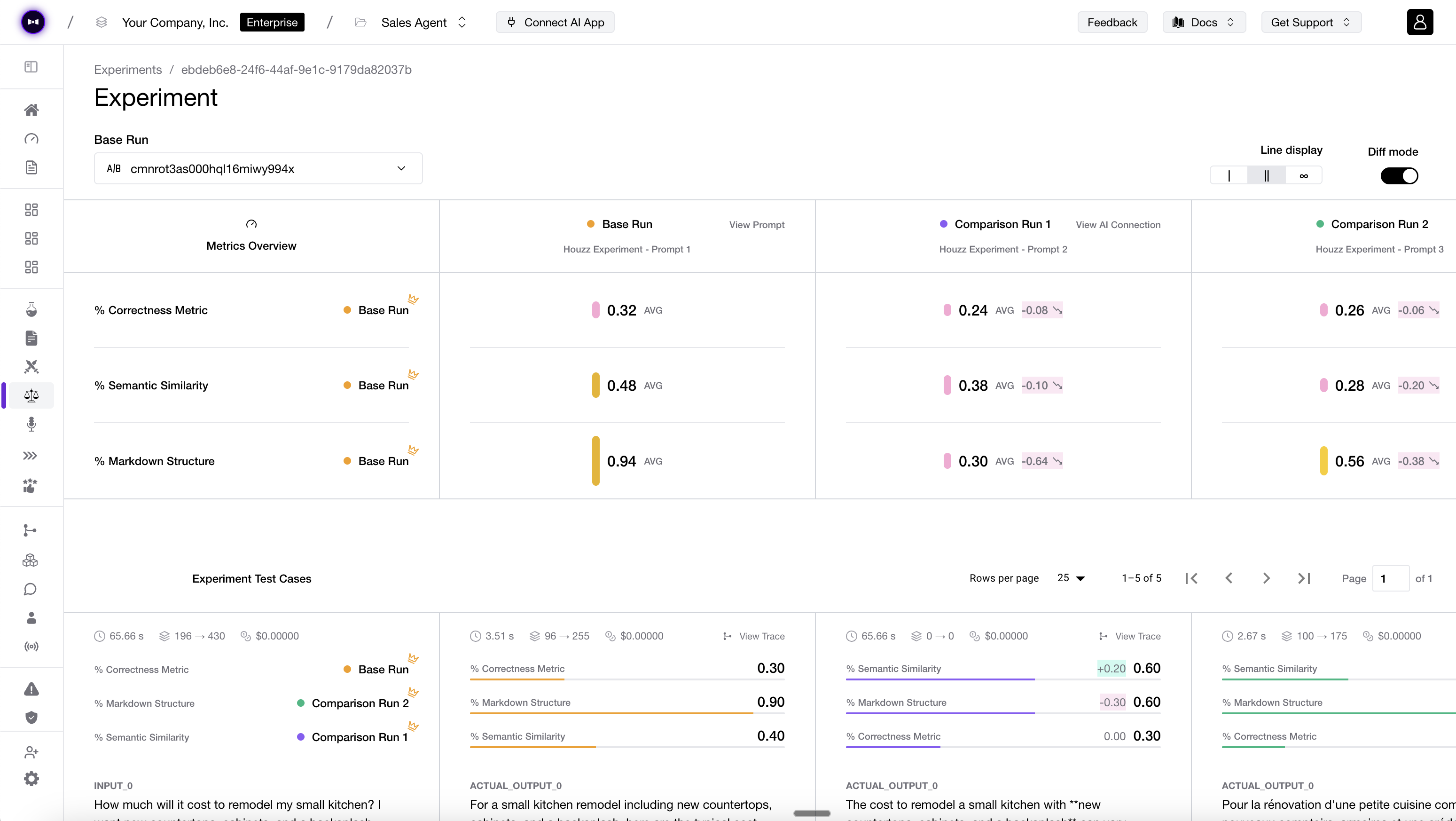

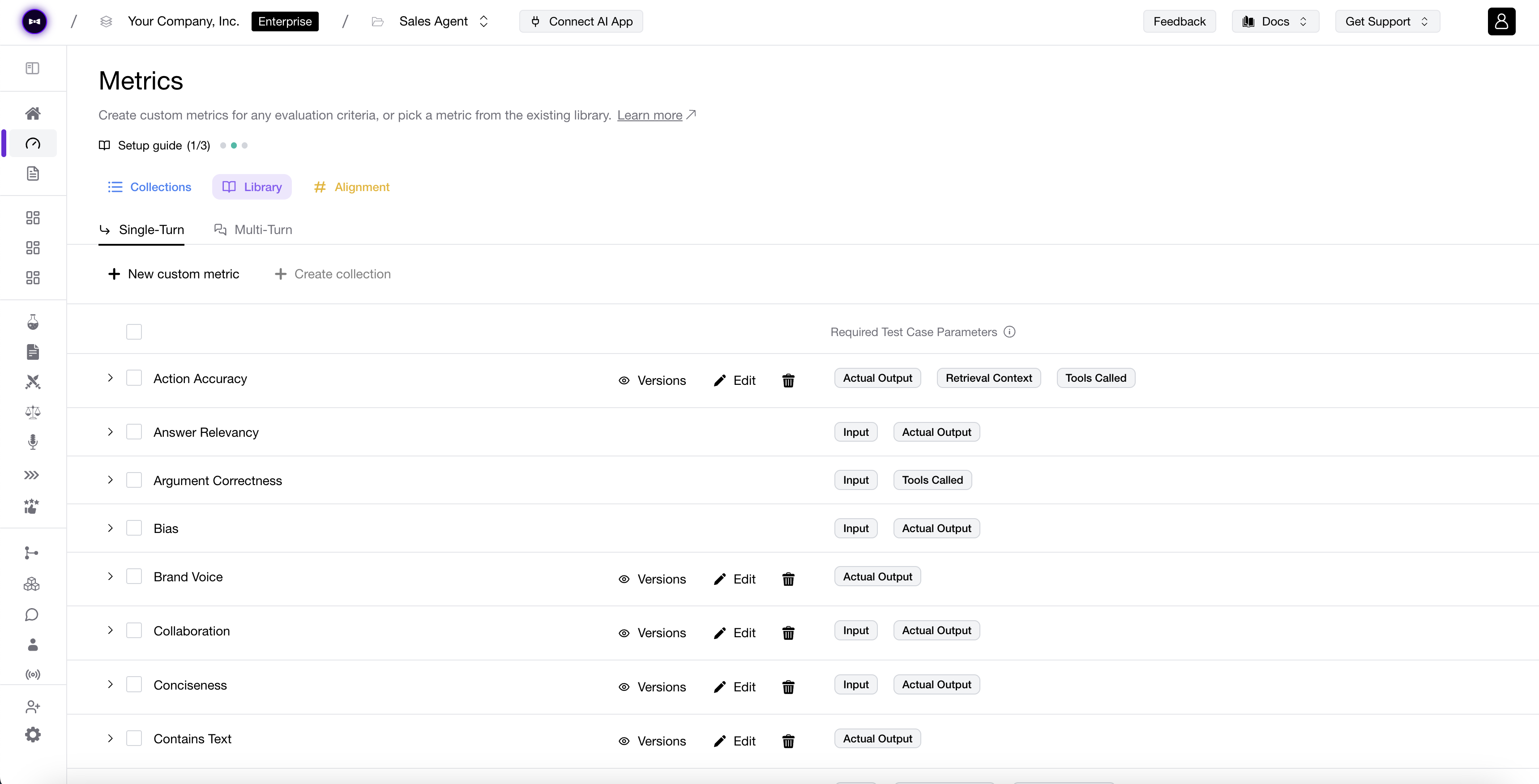

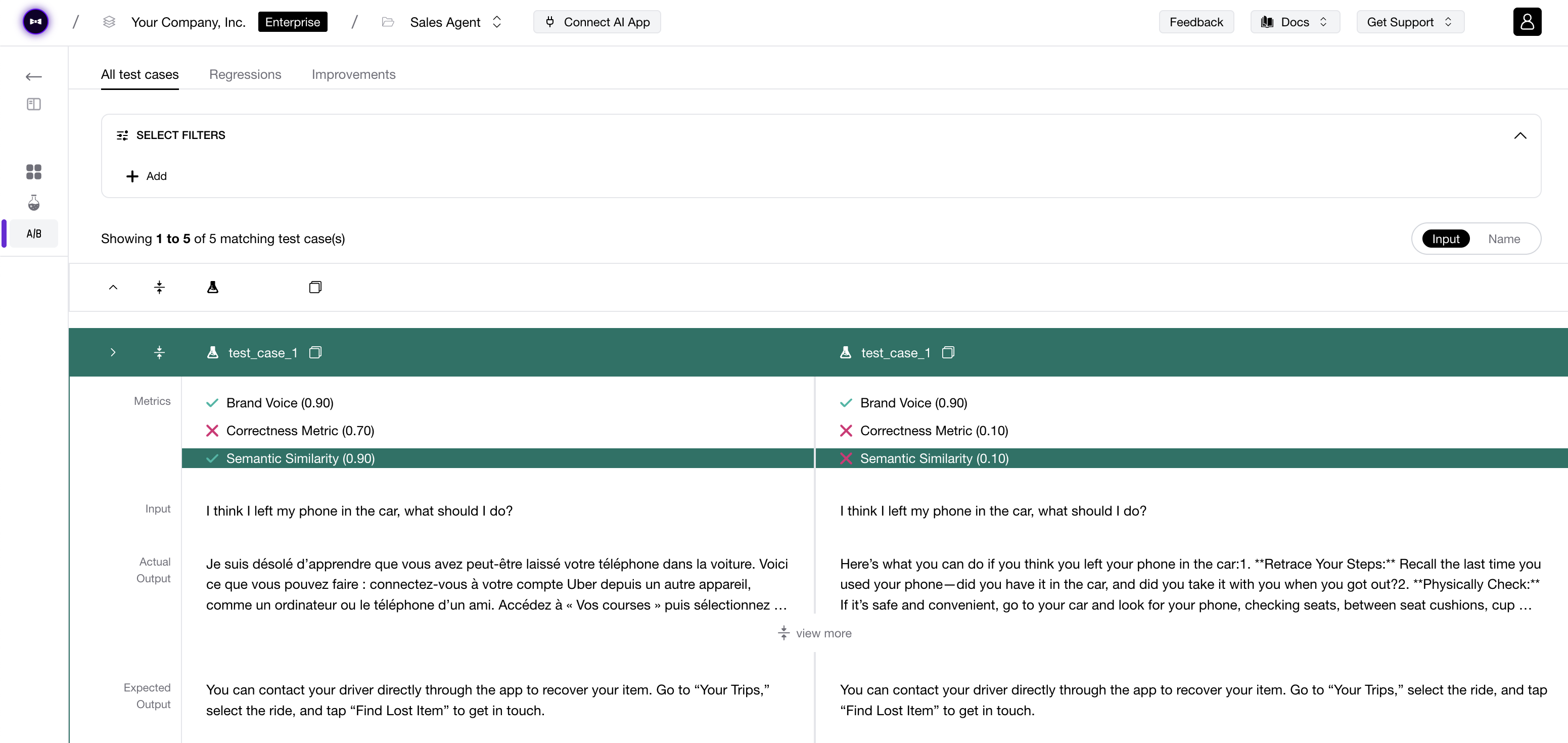

Spin up evaluations from the dashboard. Annotate traces and turn feedback into reusable metrics. Build custom dashboards your team actually understands. Stop filing tickets to engineering every time you want to test a prompt change.

- No-code eval workflows for PMs, QA, and domain experts.

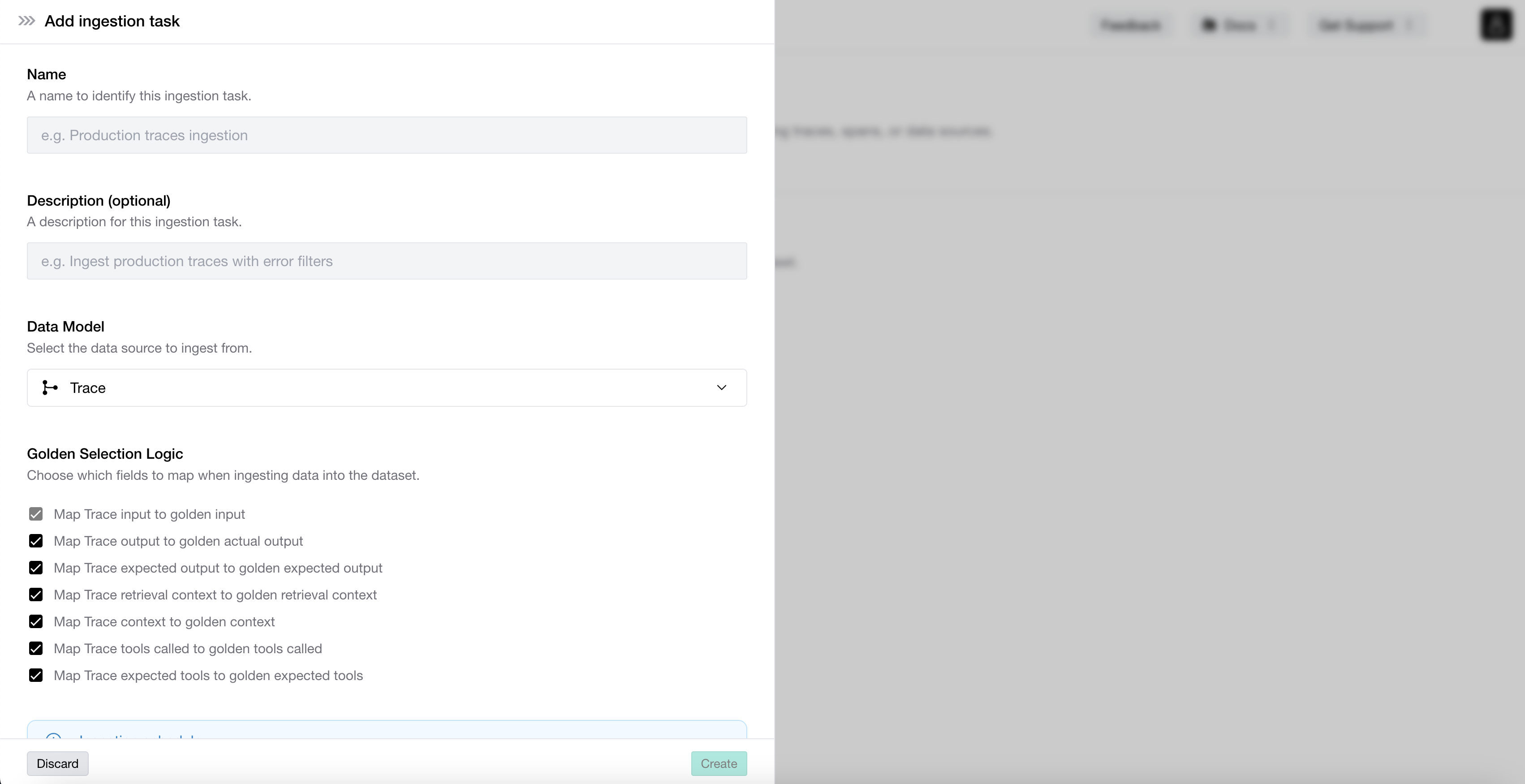

- Annotation queues that turn human feedback into automated metrics.

- Custom dashboards and reports for stakeholders who don't read code.

We connect directly to your AI app over HTTP so non-technical team members can collaborate equally on AI quality.

For engineering teams

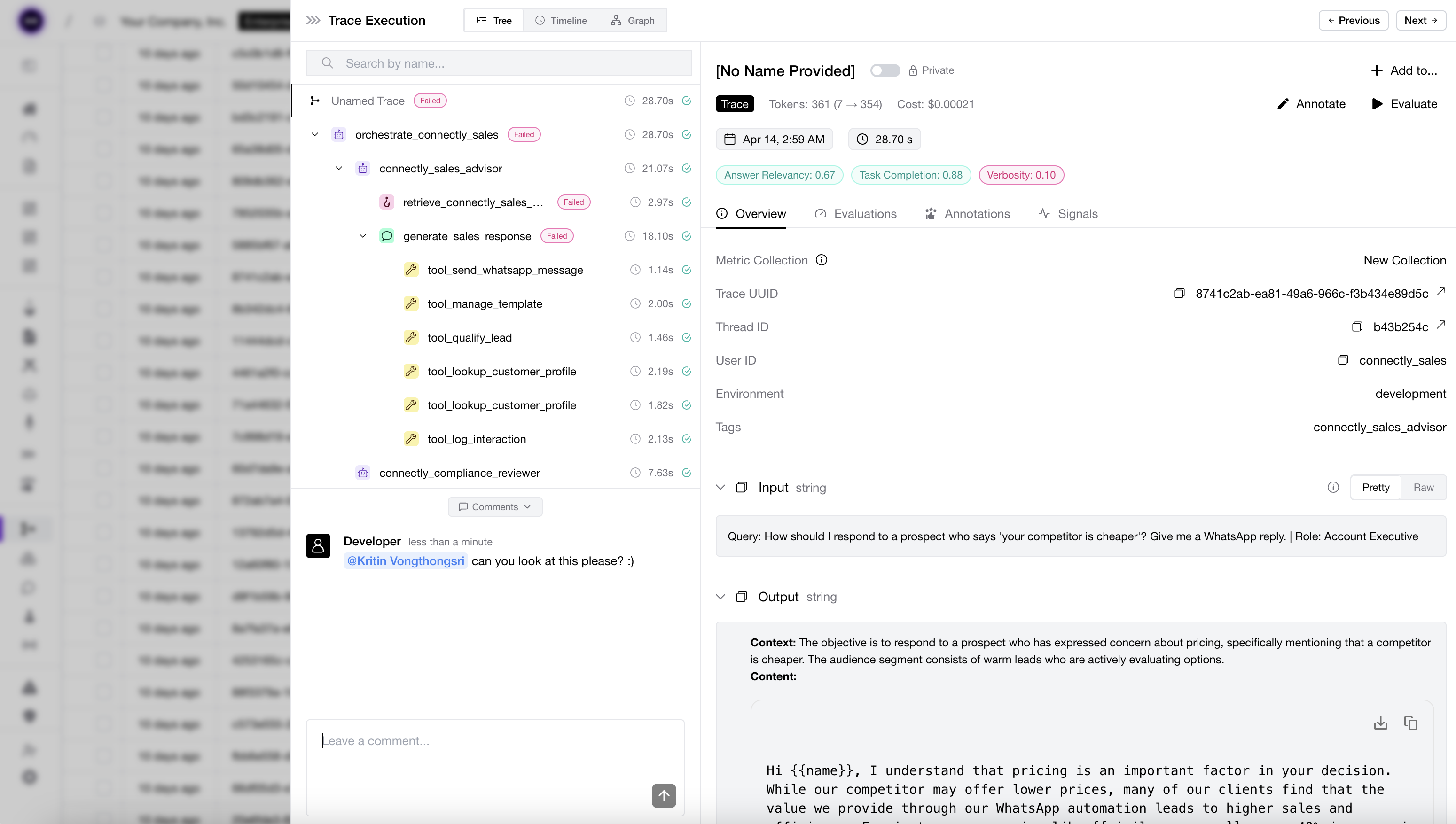

Tracing and evals built for the way you actually ship.

Drop in our SDK or use OpenTelemetry to capture every LLM call, tool call, and agent step. Run regression tests on every prompt change in CI/CD. Get alerted the moment quality drops in production. The platform is framework-agnostic: it works with LangChain, LangGraph, CrewAI, OpenAI Agents, Pydantic AI, or your own stack.
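To make the idea of a CI regression test on a prompt change concrete, here is a minimal, library-free sketch. It is not the Confident AI or DeepEval API: `check_regressions`, `RegressionReport`, the score dictionaries, and the `tolerance` value are all hypothetical names chosen for illustration. It assumes you already have a per-test-case score between 0 and 1 for the current prompt (baseline) and for the changed prompt (candidate).

```python
from dataclasses import dataclass


@dataclass
class RegressionReport:
    passed: bool
    regressions: dict[str, float]  # test case id -> size of the score drop


def check_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.05,  # assumed threshold, tune per metric
) -> RegressionReport:
    """Flag test cases whose score dropped by more than `tolerance`."""
    regressions: dict[str, float] = {}
    for case_id, old_score in baseline.items():
        # A test case missing from the candidate run is treated as a score of 0.
        new_score = candidate.get(case_id, 0.0)
        drop = old_score - new_score
        if drop > tolerance:
            regressions[case_id] = round(drop, 4)
    return RegressionReport(passed=not regressions, regressions=regressions)
```

In a pipeline, a step like this would typically exit non-zero when `passed` is false so the prompt change is blocked until the regression is reviewed.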

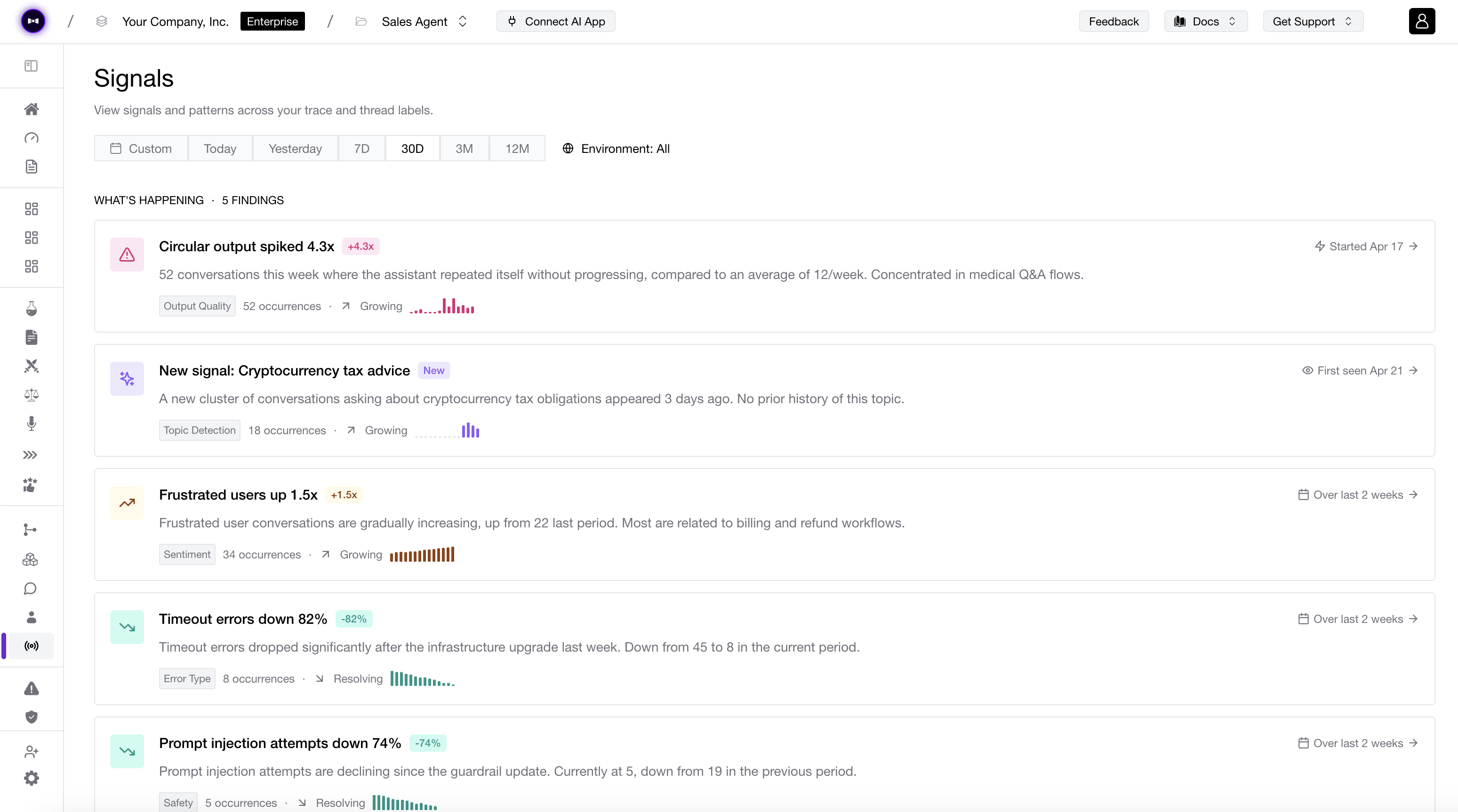

- Production tracing for every LLM call, span, and agent step.

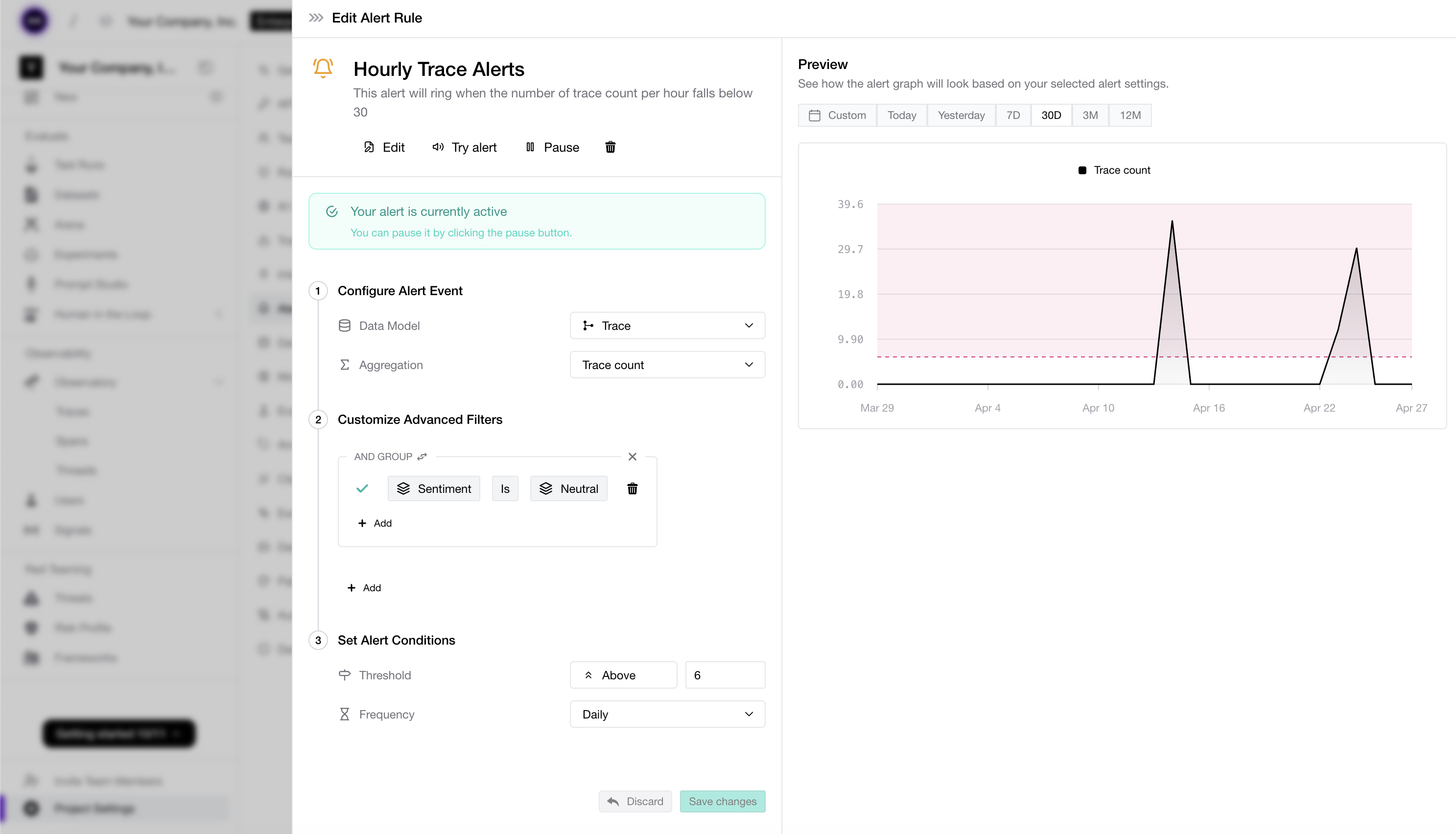

- Automatic detection of AI app failures, quality drift, user sentiment shifts, performance regressions, and cost anomalies in production.

- Real-time alerts in Slack, PagerDuty, or Teams when quality degrades.

Observability completes the AI iteration loop: trace agents, run online evals, detect issues, and feed those issues back into datasets for pre-deployment testing.
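The loop described above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the platform's implementation: `Trace`, `Dataset`, `run_online_evals`, and the toy `non_empty_answer` metric are hypothetical names, and a real metric would score quality rather than check for an empty output.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Trace:
    input: str
    output: str


@dataclass
class Dataset:
    cases: list[Trace] = field(default_factory=list)


def run_online_evals(
    traces: list[Trace],
    metric: Callable[[Trace], float],
    threshold: float,
    dataset: Dataset,
) -> float:
    """Score each trace, copy failing traces into the dataset, return the failure rate."""
    if not traces:
        return 0.0
    failures = [t for t in traces if metric(t) < threshold]
    # Failing production traces become test cases for the next pre-deployment run.
    dataset.cases.extend(failures)
    return len(failures) / len(traces)


def non_empty_answer(trace: Trace) -> float:
    # Toy metric (assumption): 1.0 if the app returned any text, else 0.0.
    return 1.0 if trace.output.strip() else 0.0
```

The returned failure rate is the kind of signal an alert could be attached to, while the growing dataset is what the next regression run would be tested against.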

For platform teams

Deploy once. Scale to every team in your org.

Self-host on your own infrastructure or run on our cloud. Multi-tenant by default: give every product team its own workspace with shared compliance and observability standards. Built for the AI platform team that's responsible for quality across the whole company.

- On-prem deployment in 3 days, automated updates in 30 minutes.

- SSO, RBAC, granular permissions, and audit logs.

- SOC 2 Type II and GDPR compliance, with custom data retention available.

One platform, one source of truth for AI quality across every team.

Still on the fence? Talk to us.

We can only show you so much on a website. Talk to someone on the Confident AI team and see if we're a good fit.

Book a Demo